In the rapidly evolving field of humanoid robotics, one persistent challenge is transferring policies trained in virtual environments to real-world hardware. Robots that excel in controlled simulations often falter when faced with unpredictable terrain, lighting, and other environmental variability. This problem, known as the “reality gap,” has historically slowed the deployment of advanced robotics. Photorealistic simulation combined with domain randomization, a pairing some call “Sim-to-Real 2.0,” is reshaping how humanoids are trained and deployed. By building virtual environments that closely resemble reality and systematically varying simulation parameters, engineers can substantially reduce the need for expensive, time-consuming real-world trials. This article explores the technology, techniques, and implications of this approach.

Introduction: The Promise of Next-Generation Simulation

Traditional simulation environments, while useful for testing basic locomotion or manipulation strategies, often fail to capture the subtle complexities of the real world. Variations in lighting, friction, textures, sensor noise, and unexpected obstacles can cause robots to fail when deployed outside the lab.

Sim-to-Real 2.0 addresses these limitations by combining:

- Photorealistic Rendering

  - High-fidelity visuals and physics engines mimic real-world appearance and dynamics, allowing AI models to experience realistic sensory input during training.

- Domain Randomization

  - Rather than aiming for perfect replication, simulations introduce systematic variations in parameters such as surface textures, lighting conditions, object positions, and friction coefficients.

  - This variation pushes trained policies to be robust and adaptable to real-world conditions.

By training in such environments, humanoid robots can learn generalizable behaviors that perform reliably outside the simulation, reducing dependence on trial-and-error in physical spaces.
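
As a rough illustration of the idea, the sketch below draws a fresh set of simulation parameters for each training episode. The parameter names, the ranges, and the `EpisodeParams` container are assumptions made for this example; they are not taken from any particular simulator, and a real pipeline would map them onto its own engine settings.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeParams:
    """Simulation settings drawn fresh for each training episode (hypothetical)."""
    friction: float          # ground friction coefficient
    light_intensity: float   # relative scene brightness (1.0 = nominal)
    texture_id: int          # index into a hypothetical texture library
    object_offset_m: float   # lateral shift of the target object, in metres


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw one randomized configuration; all ranges are illustrative."""
    return EpisodeParams(
        friction=rng.uniform(0.4, 1.2),
        light_intensity=rng.uniform(0.3, 1.5),
        texture_id=rng.randrange(50),
        object_offset_m=rng.uniform(-0.10, 0.10),
    )


rng = random.Random(0)  # fixed seed so a training run can be reproduced
for episode in range(3):
    params = sample_episode_params(rng)
    # A real pipeline would apply `params` to the simulator before each reset.
    print(episode, params)
```

Passing an explicit `random.Random` instance, rather than using the module-level generator, keeps the randomization reproducible and makes it easy to give each parallel environment its own seed.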

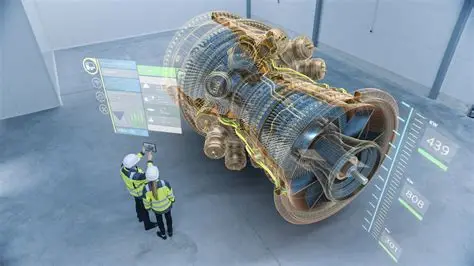

The Tech Stack: Platforms Powering Advanced Simulations

Several simulation platforms have emerged as leaders in photorealistic, physics-rich virtual training:

- NVIDIA Isaac Sim

  - Built on the Omniverse platform, Isaac Sim integrates real-time ray tracing and advanced physics.

  - Supports high-fidelity modeling of humanoid robots, sensors, and dynamic environments.

  - Enables multi-robot simulations, allowing teams of humanoids to learn coordinated behaviors.

- Unity

  - A flexible game engine widely used in robotics research for visual realism and rapid prototyping.

  - Unity’s ML-Agents toolkit allows for reinforcement learning in highly varied virtual scenarios.

  - Supports integration with robotic middleware and real-world hardware.

- MuJoCo and PyBullet

  - Physics-first platforms focusing on dynamic fidelity and control optimization.

  - Often paired with photorealistic renderers to create hybrid visual and physical simulations.

- Custom Engines

  - Some organizations develop proprietary engines tailored to specific sensor suites, including LiDAR, stereo cameras, and tactile feedback systems.

Together, these platforms let engineers train complex humanoid behaviors such as walking, climbing stairs, and manipulating objects entirely in simulation, before the robot ever sets foot in a real-world environment.

Domain Randomization: Building Robustness Through Variation

Domain randomization is a critical technique in bridging the reality gap. Its core principle is deceptively simple: expose the robot to as many variations of the simulated world as possible, so it learns to generalize.

Key Strategies Include:

- Visual Randomization

  - Varying lighting, shadows, reflections, textures, and object colors to prevent overfitting to idealized visuals.

- Physical Randomization

  - Altering friction coefficients, object masses, and surface inclines to teach the robot to adapt to different dynamics.

- Sensor Noise Simulation

  - Adding realistic noise to cameras, IMUs, and force sensors, mimicking the imperfections found in real hardware.

- Environmental Variation

  - Random placement of obstacles, moving platforms, and uneven terrain ensures that trained policies remain robust across unpredictable scenarios.

By embracing randomness, robots trained in simulation tend to be far less brittle when confronted with real-world variability, which lowers deployment risk and the need for retraining.
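
Sensor noise simulation can be sketched in a few lines. The example below assumes a simple additive model for a gyroscope (a constant per-axis bias plus zero-mean Gaussian noise) and redraws both for every episode. The `NoisyImu` class, the `make_random_imu` helper, and the numeric ranges are hypothetical; real IMUs show further effects, such as bias drift and quantization, that this sketch leaves out.

```python
import random
from dataclasses import dataclass


@dataclass
class NoisyImu:
    """Corrupts ideal simulated gyro readings with bias and white noise."""
    bias: tuple            # constant offset per axis, in rad/s
    noise_std: float       # white-noise standard deviation, in rad/s
    rng: random.Random

    def read(self, true_rates):
        """Return the ideal angular rates plus bias and Gaussian noise."""
        return tuple(
            rate + b + self.rng.gauss(0.0, self.noise_std)
            for rate, b in zip(true_rates, self.bias)
        )


def make_random_imu(rng: random.Random) -> NoisyImu:
    """Draw a new bias and noise level per episode (illustrative ranges)."""
    bias = tuple(rng.uniform(-0.02, 0.02) for _ in range(3))
    return NoisyImu(bias=bias, noise_std=rng.uniform(0.001, 0.01), rng=rng)


rng = random.Random(42)
imu = make_random_imu(rng)
# The policy sees the corrupted reading, never the ideal one.
print(imu.read((0.0, 0.5, -0.25)))
```

Randomizing the noise level itself, and not only the noise samples, means the policy cannot tune itself to a single sensor quality.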

Quantifying the Reality Gap Reduction

Reported results suggest that Sim-to-Real 2.0 can substantially improve training efficiency:

- Reduction in Real-World Training Time

  - Some robotics teams report 70–80% fewer hours spent on real-world trial and error, relying instead on virtual testing.

- Improved Policy Robustness

  - Robots trained with photorealistic simulations and domain randomization exhibit higher success rates in complex tasks, such as object manipulation, stair climbing, and obstacle navigation.

- Accelerated Iteration Cycles

  - Rapid simulation allows for quick testing of algorithmic changes, parameter adjustments, and novel sensor integrations without risking hardware damage.

These outcomes indicate that simulation is no longer just a testing tool; it is becoming a primary method for skill acquisition in humanoid robotics.
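
One simple way to put numbers on these claims is to compare task success rates in simulation and on hardware, and to track how many real-world hours a project needs before and after adopting the approach. The sketch below assumes that definition of the gap (simulated success rate minus real success rate), which is only one of several possible metrics. The trial logs and hour counts are made up for illustration.

```python
def success_rate(outcomes):
    """Fraction of trials that succeeded; `outcomes` is a list of booleans."""
    if not outcomes:
        raise ValueError("need at least one trial")
    return sum(outcomes) / len(outcomes)


def reality_gap(sim_outcomes, real_outcomes):
    """Success rate in simulation minus success rate on hardware."""
    return success_rate(sim_outcomes) - success_rate(real_outcomes)


def relative_reduction(baseline_hours, new_hours):
    """Fractional reduction in real-world training hours."""
    return (baseline_hours - new_hours) / baseline_hours


# Made-up trial logs, for illustration only.
sim_trials = [True] * 19 + [False] * 1    # 95% success in simulation
real_trials = [True] * 16 + [False] * 4   # 80% success on hardware

print(f"gap: {reality_gap(sim_trials, real_trials):.2f}")    # gap: 0.15
print(f"hours saved: {relative_reduction(200, 50):.0%}")     # hours saved: 75%
```

A gap near zero means the simulated success rate is a good predictor of hardware performance. A large positive gap suggests the policy has overfit to the simulator.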

Implications for Humanoid Development

The convergence of photorealism and domain randomization impacts robotics across multiple dimensions:

- Faster Deployment

  - Policies trained in virtual environments can be deployed in real-world applications with minimal fine-tuning.

- Cost Reduction

  - Reduces wear and tear on expensive robots and lowers the need for large-scale physical test setups.

- Improved Safety

  - Complex behaviors, including risky maneuvers or human interaction tasks, can be tested virtually before physical deployment.

- Enhanced AI Training

  - Reinforcement learning and imitation learning algorithms benefit from massive, accelerated training datasets generated entirely in simulation.

- Cross-Platform Applicability

  - Simulation-trained policies can transfer between robot models with similar kinematics, enabling more modular humanoid software development.

As a result, Sim-to-Real 2.0 is helping close the gap between research prototypes and production-ready humanoids.

Call to Action

For robotics engineers, researchers, and enthusiasts, understanding the tools and techniques of Sim-to-Real 2.0 is critical. Access our curated list of the top 5 simulation platforms for humanoid development, explore their capabilities, and begin training robots in photorealistic, randomized environments that bring virtual learning closer to reality than ever before.